Pay-per-click ads via Google, Facebook, and others are the tool of choice for many companies to reach new customers.

Introduction

Digital advertising is continually evolving: according to the Global Digital Ad Trends 2019 study, digital advertising almost single-handedly drove last year's increase in total media advertising spending worldwide. The development of …

Digital Marketing

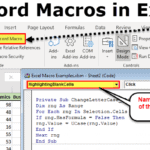

Excel: A comprehensive guide (not only) for marketers

Is Excel old-fashioned? Not at all. It is still one of the most widely used planning tools in everyday office life. The fact that a whole community has grown up around Excel shows that the program remains highly relevant, but also that it is not always intuitive at first glance. We will show you the basics, tips, and tricks you should know about Excel in the …

Looking for an SEO strategy to be more visible and increase your traffic?

You are on the right site. We will tell you everything. However, I must warn you that an SEO strategy is not easy, glamorous, or fast. That is not the only bad news: you have to work hard if you want results from your SEO strategy on Google! I know most people have left this article because any sign of hard work frightens …